Introduction

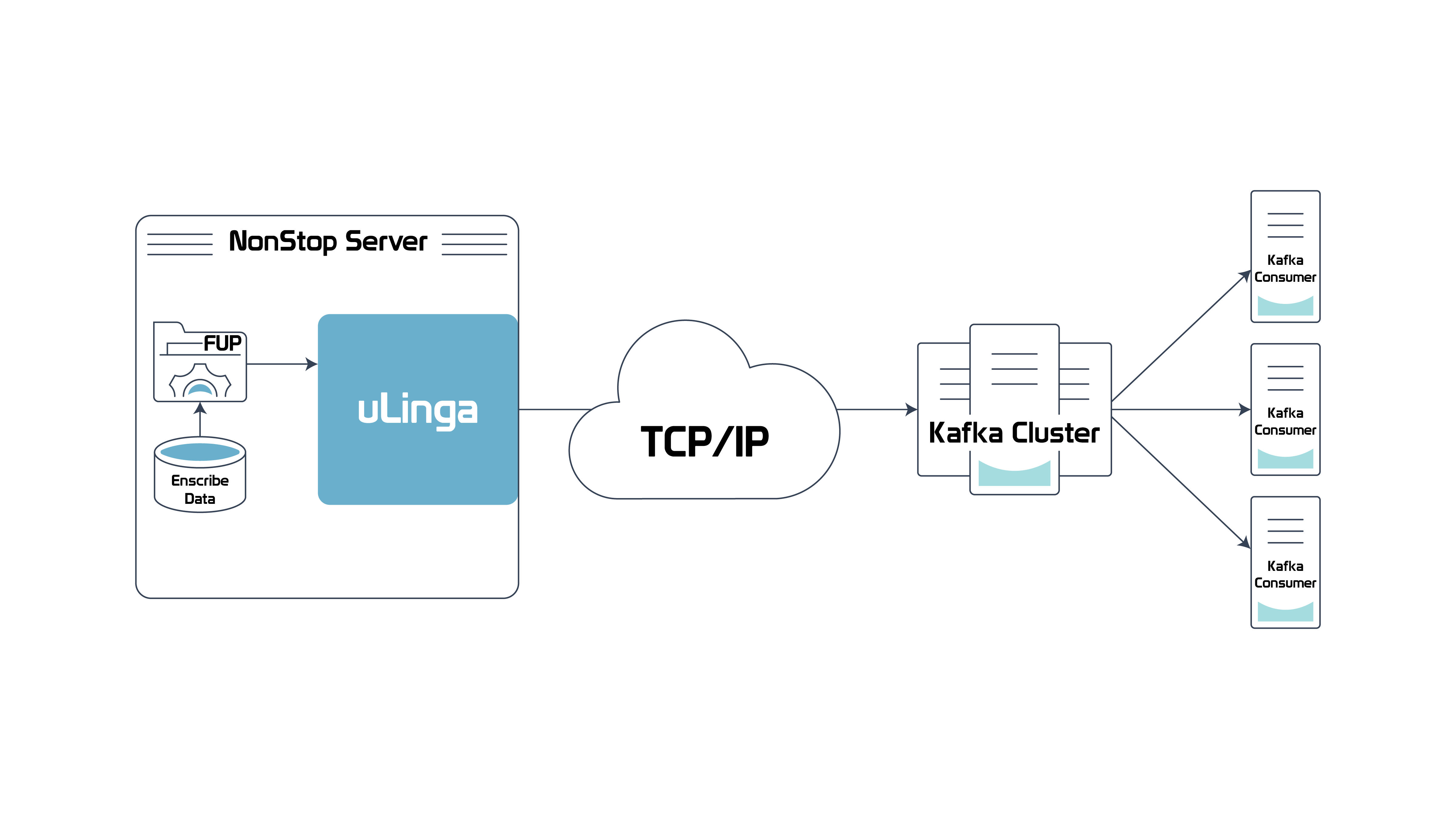

In the Sept-Oct 2021 edition of The Connection, we gave an overview of Kafka and how many Fortune 500 companies use it to manage “streams” of data, which have become prevalent as internet usage massively increases the amount of data being generated and needing to be processed. Kafka allows these huge volumes of data to be processed in real time through a combination of “producers” and “consumers”, which work with a Kafka “cluster”, the main data repository.

We also introduced uLinga for Kafka, and explained how it takes a different approach to Kafka integration for NonStop applications and data.

uLinga for Kafka – Overview

uLinga for Kafka is the latest addition to Infrasoft’s uLinga product range, a solution suite that large banks, telcos, and manufacturers have used for many years to provide reliable, mission-critical communications infrastructure. uLinga for Kafka brings to the Kafka space the same performance, scalability, security, and manageability that users have relied on in the other uLinga products.

uLinga for Kafka takes a unique approach to Kafka integration: it runs as a natively compiled Guardian process pair, and supports the Kafka communications protocols directly over TCP/IP. This removes the need for Java libraries or intermediate databases, providing the best possible performance on NonStop. It also allows uLinga for Kafka to directly communicate with the Kafka cluster, getting streamed data across as quickly and reliably as possible.

Other NonStop Kafka integration solutions require an interim application and/or database, generally running on another platform. This can be less than ideal, as that additional platform may not have the reliability of the NonStop and could introduce a single point of failure. The extra hop can also increase latency, delaying the arrival of data in Kafka.

uLinga for Kafka – Application Integration

uLinga for Kafka also differs from other NonStop Kafka integration solutions in the range of application integration options it supports. Other solutions support a CDC (Change Data Capture)-type approach, which uLinga for Kafka can also support with its FILEREADER functionality, but uLinga for Kafka goes a step further by providing a range of true application programming interfaces (APIs). These include Pathsend, Guardian interprocess messages (IPC), and HTTP.

In this article, we look at a specific uLinga for Kafka use case that allows NonStop Event Management Service (EMS) messages to be quickly and efficiently streamed to Kafka.

The Challenge

The NonStop EMS subsystem handles event messages from the operating system, built-in services, and HPE and third-party applications. It is usually the first port of call for operators and support personnel when a NonStop system is experiencing issues. From talking with our customers, we’ve learned that the tendency for operators and support personnel to each start up their own EMS viewers can itself impact system performance at a time when the system might already be struggling.

Kafka to the Rescue

Kafka is designed to handle massive volumes of event data such as that gathered by EMS and is an ideal way to offload event processing to reduce the impact on the NonStop server.

uLinga for Kafka can provide this EMS-to-Kafka connectivity and quickly process huge amounts of EMS data with low latency. Once the EMS data is stored in a Kafka cluster, there are numerous options for accessing those events.

The Details

uLinga for Kafka supports an IPC mechanism, allowing any NonStop application to communicate with uLinga by sending Guardian IPC messages. In the last edition of The Connection we showed how this could be used to FUP COPY any file directly to Kafka:

TACL> FUP COPY $INFRA.DATA.DATAFILE, $ULKAF.#KAFKA1

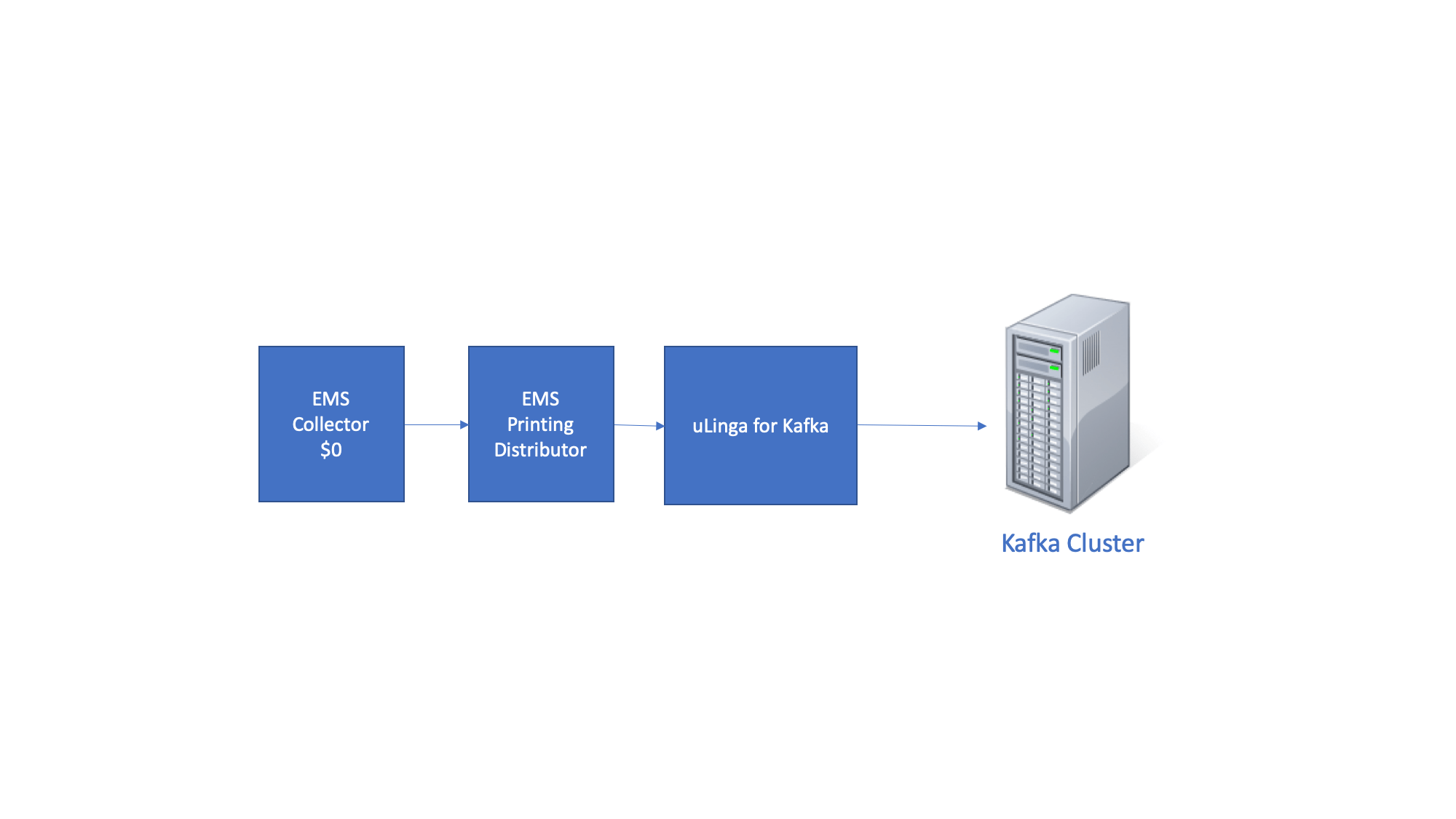

This same IPC mechanism can be used to forward EMS messages to Kafka. An EMS “printing distributor” can be started to route all traffic from an EMS collector to uLinga for Kafka, like this:

TACL> EMSDIST /NOWAIT/ COLLECTOR $0, TYPE PRINTING, TEXTOUT $ULKAF.#KAFKA1

This will allow EMS events to be sent to Kafka with extremely low (sub-second) latency.

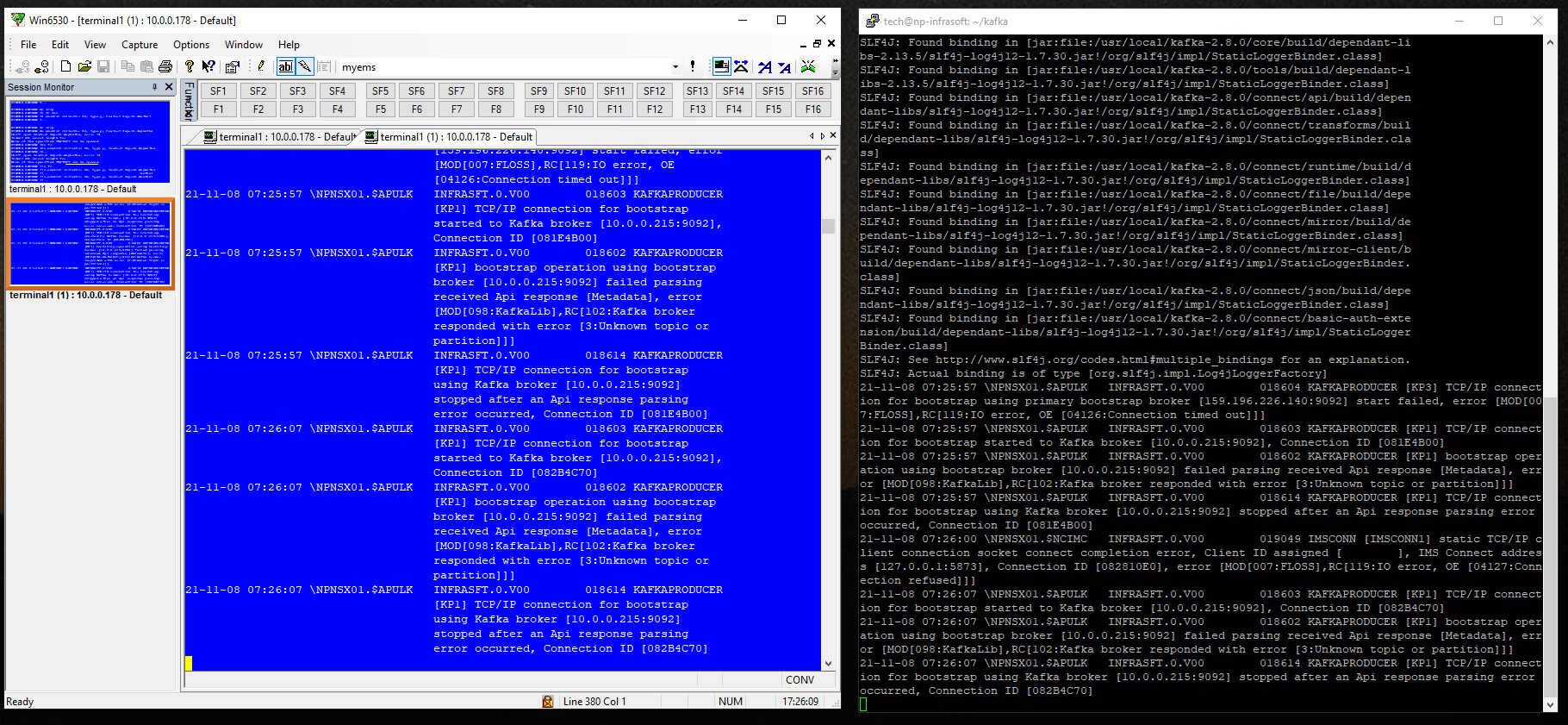

Once the events are stored in a Kafka cluster, they can be accessed in a number of ways. The simplest is to run a Kafka Console Consumer. The attached screenshots show events appearing on the NonStop and a Kafka Console Consumer in parallel.

Alternatively, users can write a Kafka consumer to access specific records, or use an off-the-shelf solution like Offset Explorer or KaDeck to filter and display messages that meet set criteria. One of our customers is trialing KaDeck’s facility to provide operators with different views showing messages that contain certain text values. That same customer plans to remove direct access to NonStop EMS collectors, as operators and support personnel will be able to get everything needed from Kafka. This reduces the processing overhead on the NonStop while giving greater flexibility to staff to access only the events that they need to see.
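As a rough illustration of the "write a Kafka consumer" option, the following Python sketch filters event text for chosen substrings, much like the per-operator views described above. It is not part of uLinga for Kafka: it assumes the third-party kafka-python client, assumes the EMS events arrive as UTF-8 text (the actual encoding may differ), and the function names, topic name, and broker address are all hypothetical.

```python
from typing import Iterable, Iterator, Sequence


def matches(event_text: str, needles: Sequence[str]) -> bool:
    """Return True if the event text contains any needle (case-insensitive)."""
    lowered = event_text.lower()
    return any(needle.lower() in lowered for needle in needles)


def filter_events(events: Iterable[str], needles: Sequence[str]) -> Iterator[str]:
    """Yield only those events that contain at least one of the needles."""
    for event in events:
        if matches(event, needles):
            yield event


def consume_filtered(topic: str, bootstrap_servers: str, needles: Sequence[str]) -> None:
    """Print matching events from a Kafka topic until interrupted."""
    # Imported here so the helpers above can be used without the client installed.
    from kafka import KafkaConsumer  # third-party package: kafka-python

    consumer = KafkaConsumer(
        topic,
        bootstrap_servers=bootstrap_servers,
        auto_offset_reset="earliest",  # start from the oldest retained event
        # Assumption: event text is UTF-8; undecodable bytes are replaced.
        value_deserializer=lambda raw: raw.decode("utf-8", errors="replace"),
    )
    for event in filter_events((record.value for record in consumer), needles):
        print(event)
```

A call such as `consume_filtered("ems-events", "localhost:9092", ["CPU", "TMF"])` would then show only events mentioning either term, where the topic name and broker address are placeholders for whatever the local configuration uses.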

uLinga for Kafka is now available. Please contact [email protected] for more information or to arrange a trial.
