The need to back up, replicate, and recover data has always been imperative; today it is a priority across all enterprises. Over four decades, storage and backup technologies have evolved steadily, from traditional reel-to-reel tapes, through disk drives and their virtual counterparts, to breakthrough technologies like data deduplication and object storage that have greatly reduced data redundancy and overhead. Yet because data storage is a long-term strategic investment, embracing newer technologies and systems is a herculean task for CIOs, especially at a time when enterprises are generating data at unprecedented levels. Consequently, many enterprises still use legacy applications that run on mission-critical servers. Nonetheless, it is important for players in the sector to reduce their long-term data backup and archival costs by leveraging the cheaper and more advanced technologies available today, for example object storage, which can accommodate petabyte-scale data backup and long-term retention.

Modernizing data backup therefore deserves focus: a wide array of new solutions now provides an up-to-date, cost-effective approach to data backup, replication, protection, and recovery across all cloud and legacy platforms.

Disadvantages of Target Side Dedup Appliances

Unlike modern Purpose-Built Backup Appliances (PBBAs), target-side disk deduplication is an old technology. Primitive forms of deduplication were initially developed in the 1970s, primarily to store large amounts of customer contact information without consuming large amounts of storage space. However, deduplication really gained popularity in the early 2000s, when small disk capacities and steep HDD prices were the norm. The concerns that drove the technology 20 years ago no longer apply.

While deduplication prevents multiple copies of files and disk blocks from being stored, achieving a high deduplication ratio requires performing time-consuming “full backups”. Additionally, deduplication manufacturers generally charge a premium of 4 to 7 times regular disk storage prices for every TB of raw disk capacity, on the assumption that their product will achieve a deduplication ratio of at least 10:1 (or more with highly repetitive data). However, certain customer data types are unique and will not compress or deduplicate. Customers with such data pay a large premium for deduplication disk hardware compared with regular, simple disk space.
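To make the premium concrete: if a dedup appliance costs M times as much per raw TB as plain disk and achieves a D:1 ratio, each logical TB costs M/D relative to plain disk, so the appliance only breaks even when D equals M. This is a minimal sketch that assumes plain disk stores data uncompressed and ignores power, support and licensing; the function name is illustrative.

```python
def effective_cost_per_logical_tb(price_multiple: float, dedup_ratio: float) -> float:
    """Relative cost of one logical TB on a dedup appliance (plain disk = 1.0).

    price_multiple: appliance price per raw TB divided by plain-disk price per raw TB.
    dedup_ratio: logical TB stored per raw TB (10.0 means 10:1; 1.0 means no reduction).
    """
    if price_multiple <= 0 or dedup_ratio <= 0:
        raise ValueError("price_multiple and dedup_ratio must be positive")
    return price_multiple / dedup_ratio


# Assumption: plain disk stores one logical TB per raw TB, so its relative cost is 1.0.
for multiple in (4.0, 7.0):
    for ratio in (10.0, 2.0, 1.0):
        cost = effective_cost_per_logical_tb(multiple, ratio)
        verdict = "cheaper" if cost < 1.0 else "not cheaper"
        print(f"{multiple:g}x premium at {ratio:g}:1 -> {cost:.2f} ({verdict} than plain disk)")
```

At a 4x premium, a 10:1 ratio costs 0.40 of plain disk, but unique data that stays at 1:1 costs 4.00; at a 7x premium, even a 2:1 ratio costs 3.50.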

Deduplication subsystems were originally designed to replace magnetic tape and other storage methodologies, not to complement them. Data portability is virtually non-existent with deduplication; it was not designed for tiering or for sending data to a lower-cost archival medium such as tape, object storage or a public cloud (object storage using the S3 protocol). The ability to move data out of the deduplication storage subsystem was added much later, after the lack of this fundamental capability generated customer complaints. When data needs to be moved out of the deduplication subsystem, it must be fully rehydrated, a painfully slow process that may require third-party software to move and track the data as it is written to tape. Certain manufacturers have created a process by which data can be transferred somewhat more efficiently; however, this usually means supplier lock-in, because the data can only go to that particular vendor’s cloud product, many of which have repeatedly proven immature and suboptimal in a marketplace with much better choices. And, of course, there have been some high-profile failures with these proprietary cloud products.
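Rehydration follows directly from how deduplicated data is stored. In this minimal sketch, which assumes fixed-size 4 KiB chunks identified by SHA-256 digests (many products use variable-size, content-defined chunking instead), each backup is kept only as a "recipe" of chunk references, so exporting it to tape means reading and reassembling every chunk it references. The class, method and backup names are hypothetical.

```python
import hashlib
import os

CHUNK_SIZE = 4096  # hypothetical fixed chunk size


class DedupStore:
    """Toy content-addressed chunk store illustrating deduplication and rehydration."""

    def __init__(self) -> None:
        self.chunks: dict[str, bytes] = {}       # SHA-256 digest -> unique chunk data
        self.recipes: dict[str, list[str]] = {}  # backup name -> ordered chunk digests

    def write_backup(self, name: str, data: bytes) -> None:
        recipe = []
        for offset in range(0, len(data), CHUNK_SIZE):
            chunk = data[offset:offset + CHUNK_SIZE]
            digest = hashlib.sha256(chunk).hexdigest()
            self.chunks.setdefault(digest, chunk)  # each unique chunk is stored once
            recipe.append(digest)
        self.recipes[name] = recipe

    def logical_bytes(self) -> int:
        return sum(len(self.chunks[d]) for r in self.recipes.values() for d in r)

    def stored_bytes(self) -> int:
        return sum(len(c) for c in self.chunks.values())

    def rehydrate(self, name: str) -> bytes:
        # Exporting a backup means reassembling every chunk its recipe references.
        return b"".join(self.chunks[d] for d in self.recipes[name])


store = DedupStore()
base = os.urandom(CHUNK_SIZE * 100)  # stand-in for a 400 KiB data set
for night in range(10):
    # Ten nightly full backups: only the last chunk changes each night.
    store.write_backup(f"full-{night}", base[:-CHUNK_SIZE] + os.urandom(CHUNK_SIZE))

print(f"dedup ratio: {store.logical_bytes() / store.stored_bytes():.1f}:1")
total_rehydrated = sum(len(store.rehydrate(name)) for name in store.recipes)
print(f"rehydrating all backups for export writes {total_rehydrated} bytes")
```

In this toy run, ten full backups occupy about 436 KiB of unique chunks (a ratio of roughly 9:1), yet rehydrating them for export writes the full logical volume of about 4 MB, which is why moving deduplicated data out is slow.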

Finally, all deduplication disk solutions go through a process called “garbage cleaning” (garbage collection) to reclaim space and redirect links to new locations as the product optimizes space on its dedup disk. This process takes from minutes to many hours, depending on the size of the deduplication subsystem and of the backups. During this process, no backups or restores can be initiated and the system is effectively down, causing end-user complaints. The deduplication methodology can also degrade backup and restore performance once the dedup disk is as little as 55 percent full.
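A minimal mark-and-sweep sketch of dedup garbage collection, under simplifying assumptions: expiring a backup only deletes its recipe, and orphaned chunks are reclaimed later by a pass that marks every chunk still referenced and sweeps the rest. A single lock makes backup, restore and cleaning mutually exclusive, which is one simple way to stop a new backup from referencing a chunk the sweep is about to delete. It mirrors the downtime described above but is not any vendor's actual design, and it omits the compaction and link relocation real products perform. All names are hypothetical.

```python
import hashlib
import threading


class ChunkStoreWithGC:
    """Toy dedup chunk store: expiry drops recipes, garbage collection reclaims chunks."""

    def __init__(self) -> None:
        self.chunks: dict[str, bytes] = {}       # digest -> unique chunk
        self.recipes: dict[str, list[str]] = {}  # backup name -> chunk digests
        self._lock = threading.Lock()            # shared by backup, restore and GC

    def add_backup(self, name: str, chunks: list[bytes]) -> None:
        with self._lock:
            recipe = []
            for chunk in chunks:
                digest = hashlib.sha256(chunk).hexdigest()
                self.chunks.setdefault(digest, chunk)
                recipe.append(digest)
            self.recipes[name] = recipe

    def restore(self, name: str) -> bytes:
        with self._lock:
            return b"".join(self.chunks[d] for d in self.recipes[name])

    def expire_backup(self, name: str) -> None:
        # Only the recipe goes away; its chunks may be shared with other backups.
        with self._lock:
            del self.recipes[name]

    def collect_garbage(self) -> int:
        # Holding the lock for the whole mark-and-sweep pass is what forces new
        # backups and restores to wait until cleaning has finished.
        with self._lock:
            live = {d for recipe in self.recipes.values() for d in recipe}  # mark
            dead = [d for d in self.chunks if d not in live]
            for d in dead:                                                 # sweep
                del self.chunks[d]
            return len(dead)


store = ChunkStoreWithGC()
store.add_backup("mon", [b"A", b"B", b"C"])
store.add_backup("tue", [b"A", b"B", b"D"])
store.expire_backup("mon")
print(len(store.chunks))         # 4: chunk C is orphaned but still occupies space
print(store.collect_garbage())   # 1: chunk C is reclaimed
print(store.restore("tue"))      # b'ABD'
```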

Considerations When Selecting a Data Backup Solution

While not all-inclusive, the following checklist should be reviewed when NonStop IT professionals begin the selection process for a modern PBBA solution.

- The solution must allow users to fully virtualize and automate backup and restore operations, with:

  - Lights-out operation and remote administration

  - Separate front-end and back-end processing

  - Superior per-node performance, so that customer-specified backup windows are reliably met

- The solution must allow users to define policies that support flexible data storage and migration strategies, including:

  - The ability to separate data into pools and apply different protection policies, such as retention, replication, and tiering. Appropriate, automated movement of data to lower-cost media such as tape, NAS or public cloud for archival is key to the cost-effectiveness of any backup strategy

  - The ability to perform real-time backups while concurrently and transparently migrating older data from legacy tape or proprietary dedup storage to cloud object storage

  - Consolidation of all user storage subsystems for simplified backup/restore operations

- The solution must be secure, incorporating built-in encryption algorithms while fully supporting an Information Dispersal Algorithm (IDA) or erasure coding. It should also leverage the security features provided by public cloud vendors such as AWS, Google and Azure.

- The solution must be expandable and scalable for capacity and performance

- The solution must be simple to use, with an intuitive GUI for system configuration, administration and daily operations

- The solution must allow deployment in open, heterogeneous, multi-platform environments:

  - Host platforms such as Windows, Linux, UNIX (AIX, HP-UX, Solaris), IBM iSeries (AS/400), HPE NonStop (Tandem), HPE OpenVMS, and VMware®, among others

  - All major backup management applications, such as Spectrum Protect/TSM, NetBackup®, Veeam, Commvault Simpana®, BRMS (iSeries) and ABS/MDMS (OpenVMS), must be supported

- The solution must be transparent to users and existing applications

- Installation on non-proprietary, less expensive hardware is mandatory to avoid “supplier lock-in”.

- The solution should eliminate the requirement for personnel to be on-site for manual tape operations, such as loading and unloading cartridges. This consideration takes on increased importance given the current global pandemic.
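The policy requirement in the checklist, data pools that each carry their own retention, replication and tiering rules, can be modeled as a small data structure. This is a hypothetical sketch, not Storage Director's configuration format: the `PoolPolicy` fields, pool names, targets and day counts are all illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PoolPolicy:
    """Hypothetical protection policy attached to one data pool."""
    name: str
    retention_days: int                     # copies older than this are expired
    replicate_to: tuple[str, ...] = ()      # additional copies, e.g. at a DR site
    tier_after_days: Optional[int] = None   # age at which the primary copy moves tiers
    tier_target: Optional[str] = None       # e.g. "tape", "nas" or "s3-archive"


def placements(policy: PoolPolicy, age_days: int) -> list[str]:
    """Where copies of a backup image of the given age should live under this policy."""
    if age_days >= policy.retention_days:
        return []  # past retention: no copies are kept
    tiered = (
        policy.tier_after_days is not None
        and policy.tier_target is not None
        and age_days >= policy.tier_after_days
    )
    primary = policy.tier_target if tiered else "primary-disk"
    return [primary, *policy.replicate_to]


pools = [
    PoolPolicy("nonstop-audit", retention_days=2555, replicate_to=("dr-site",),
               tier_after_days=30, tier_target="s3-archive"),
    PoolPolicy("dev-scratch", retention_days=14),
]

for pool in pools:
    for age in (1, 45, 3000):
        print(f"{pool.name} at {age} days -> {placements(pool, age)}")
```

Here the audit pool keeps a primary copy plus a DR replica, moves the primary copy to object storage after 30 days and expires it after about seven years, while the scratch pool simply expires after two weeks.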

Enter Tributary Systems’ Storage Director

This checklist sounds like an unattainable dream… Does such a PBBA even exist? And for NonStop servers? The answer is yes: Tributary Systems’ Storage Director!

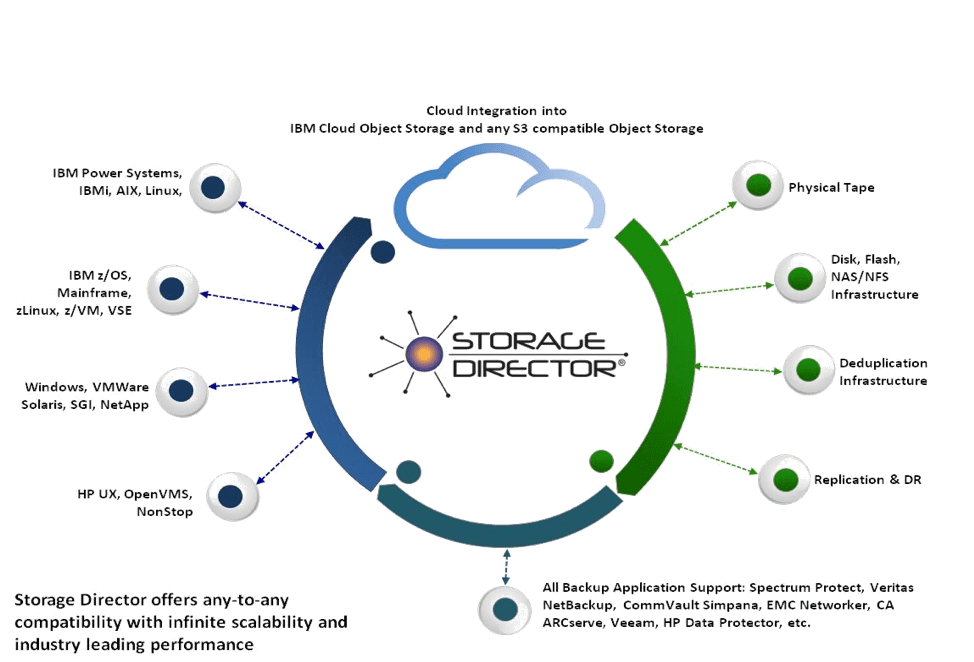

Storage Director is the industry-leading, software-defined, policy-based and tiered enterprise backup virtualization solution that enables data from any host, OS or backup application to be backed up to any storage device, medium or technology. For hybrid multi-cloud backup, Storage Director interfaces with IBM’s Cloud Object Storage, Hitachi’s HCP, or any S3-compatible cloud.

For any given customer environment, Storage Director can be configured to provide the optimal continuous-availability backup, replication and DR solution, delivering the lowest cost together with the highest performance and reliability. Storage Director is available in configurations from 10 TB to multiple petabytes, providing appropriate capacity for enterprises of all sizes. For data reduction, Storage Director uses built-in, hardware-enabled LZ compression with redundant secondary software-based compression; data is protected with AES 256-bit encryption, and replication is coupled with WAN optimization and latency management.
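The order of compression and encryption matters in any such pipeline: compression has to run before encryption, because good ciphertext looks random and leaves nothing for an LZ-style compressor to remove. This sketch uses Python's `zlib` (DEFLATE, an LZ77-based format) as a stand-in for the hardware LZ compression mentioned above, and random bytes as a stand-in for AES-256 output, since the standard library has no AES; the sample record format is invented for illustration and says nothing about Storage Director's internal pipeline.

```python
import os
import zlib

# Stand-in for a backup stream: highly repetitive records compress well.
record = b"ACCT=000123;BAL=0000100.00;STATUS=OPEN;" * 4
plaintext = record * 2000

# Correct order: compress the plaintext, then encrypt the smaller result.
compressed = zlib.compress(plaintext, 6)

# Well-encrypted data (e.g. AES-256 output) is statistically indistinguishable from
# random bytes, so random bytes of the same length stand in for ciphertext here.
ciphertext_stand_in = os.urandom(len(plaintext))
compressed_after_encrypt = zlib.compress(ciphertext_stand_in, 6)

print(f"plaintext:                    {len(plaintext):>7} bytes")
print(f"compress first:               {len(compressed):>7} bytes")
print(f"encrypt first, then compress: {len(compressed_after_encrypt):>7} bytes")
```

Compressing first shrinks the repetitive stream dramatically, while compressing the random stand-in for ciphertext yields essentially no reduction.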

As a fully virtualized target for any backup management application, such as Spectrum Protect, NetBackup, Commvault or Veeam, Storage Director enables policy-based data pools to be created, backed up to multiple targets and replicated for remote storage and DR, securely and without host intervention. Concurrently with HPE NonStop backups, Storage Director can present a single backup target for all open environments, such as Linux, Windows, VMware and UNIX, as well as other proprietary host platforms, such as IBM Mainframe (z/OS), iSeries and HPE OpenVMS, on a single node.

Simply put, Storage Director is the most straightforward and best way to connect any NonStop server to any storage device or to the cloud (public or private). Storage Director provides secure, high-performance backup/restore operations at the lowest cost.

Additional information on Storage Director is available at www.tributary.com, or by calling Matt Allen at 713-492-7434.

Storage Director allows NonStop professionals to “augment what they have and use it in creative new ways!”
